You decide...

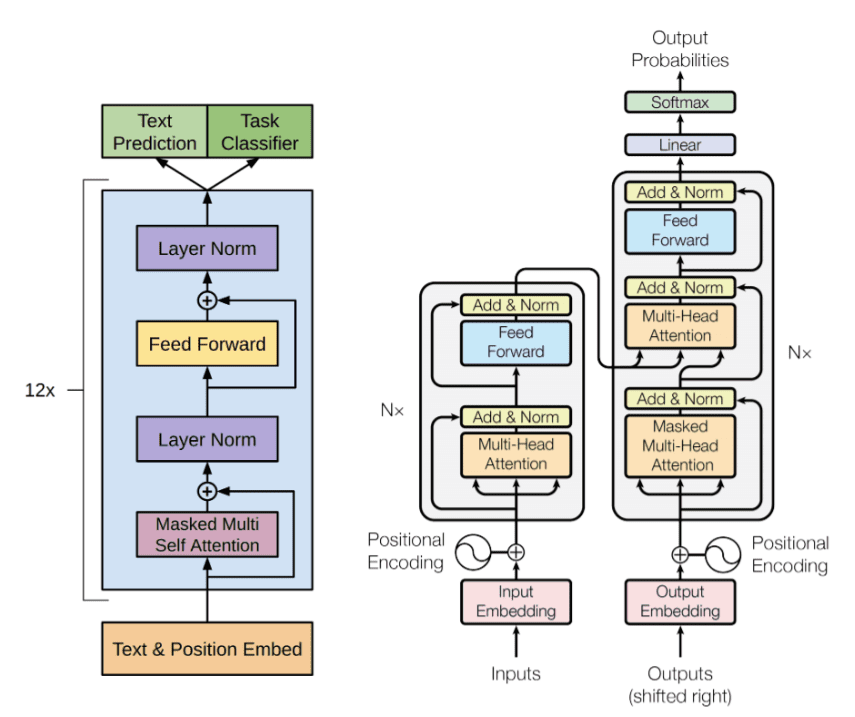

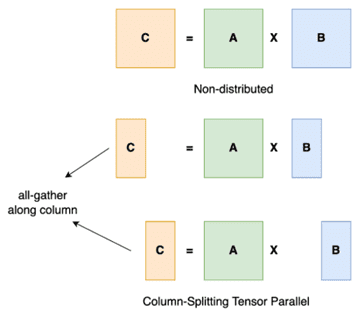

Doesn’t it seem like only yesterday that we woke up and, suddenly, ChatGPT had swept in and captured all our minds? As COOs/Operations Executives, we are learning a new lexicon: Large Language Models (LLMs), Artificial Narrow Intelligence (ANI), Artificial General Intelligence (AGI), Artificial Superintelligence (ASI), Natural Language Processing (NLP), deep learning neural networks, and even tensor parallelism (see https://research.aimultiple.com/large-language-model-training/).

It all seems so overwhelming. Where do we go from here?

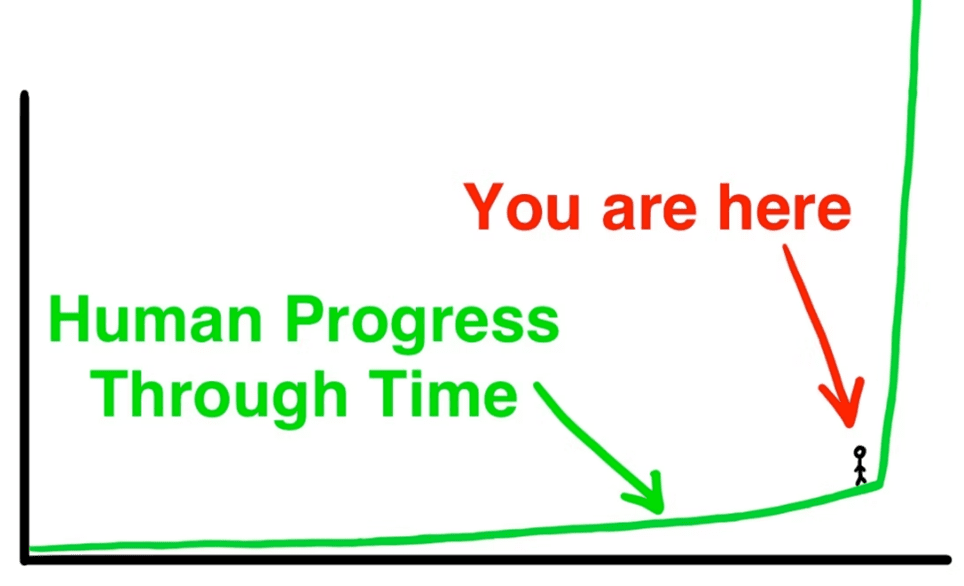

See Tim Urban’s The AI Revolution: The Road to Superintelligence (https://waitbutwhy.com/2015/01/artificial-intelligence-revolution-1.html) for a wonderful recap of human intelligence from past to present to future. Before we decide where we’re going, however, let’s take a brief look at where we’ve been.

In his article, Tim Urban highlights what futurist Ray Kurzweil calls human history’s Law of Accelerating Returns. It states that “progress in our human history moves quicker and quicker as time goes on because more advanced societies have the ability to progress at a faster rate than less advanced societies.” In other words, if you took a person from the year 1500 and placed them in the year 1750, they might be surprised by what they saw, but the experience would be far less disconcerting than jumping from 1750 to 2015, even though both spans cover roughly 250 years.

Now imagine…

You were born in 1971…before the personal computer, when a “mobile phone” meant walking down to the corner to use a pay phone.

Fast forward to 1987…you saw the battle of VHS and Betamax come to an end; you bought your first PC, a Commodore 64, and started a user’s group. (Guilty! But, for the record, we had almost 50 paying members of the Beaver County Area Commodore User’s Group that year.) Plus…there was no internet.

A person born in 1971 has seen as much progress in the last 50 years as a person would have seen from the years 1500 to 1750. That is the Law of Accelerating Returns.

What will it look like in another 20 years? Probably mind-blowing.

So, what does the COO Forum® have to do with AI and ChatGPT?

Maybe it’s no coincidence that we were founded in the heart of Silicon Valley by entrepreneur Bill Shepard in 2004. We really do function like an LLM, with input embeddings, multi-head self-attention, and feed-forward networks with normalization and residual connections.

Wait, what?

Question:

If, according to Cem Dilmegani (AIMultiple, 2023), “a large language model is a type of machine learning model that is trained on a large corpus of text data to generate outputs for various natural language processing (NLP) tasks such as text generation, question-answering and machine translation”…then what is a peer-based professional development organization like the COO Forum®?

I would contend that the COO Forum® was the original COO ChatGPT!

With ChatGPT, you type questions into a faceless computer and rely on answers generated by an AI language model trained on a large body of text from across the internet, including Wikipedia. Yikes!

At the COO Forum®, you have an actual conversation with the source data. I’m talking about a genuine, breathing person who gained experience by living the problem and emerging with useful insights. In fact, our multi-nodal datasets…er, members…are continually learning, which feeds back into our training datasets…uh, new insights. This means our COOs/Operations Executives always get the latest information, techniques and insights in real time.

If this isn’t a perfect case of tensor parallelism, I don’t know what is. But I digress.

So, where do Operations Executives go from here?

Time will tell.

But one thing is for sure. You’d better get your tensor parallelism in alignment with the right datasets, or you’ll be dropping results from an arbitrary MLP (Multilayer Perceptron) in no time!

If you would like to see what the world’s best COOs/Operations Executives are up to, come check us out at www.cooforum.net. We’re always looking to add new insights to our datasets…I mean, to attract the best and brightest Operations Executives.